Exploring the Impact of New AI Innovations

The Rise of New AI: Transforming the Future

Artificial Intelligence (AI) has been a buzzword for years, but recent advancements have ushered in a new era of AI that is transforming industries and everyday life. From classical machine learning algorithms to deep neural networks, the capabilities of AI are expanding rapidly.

What is New AI?

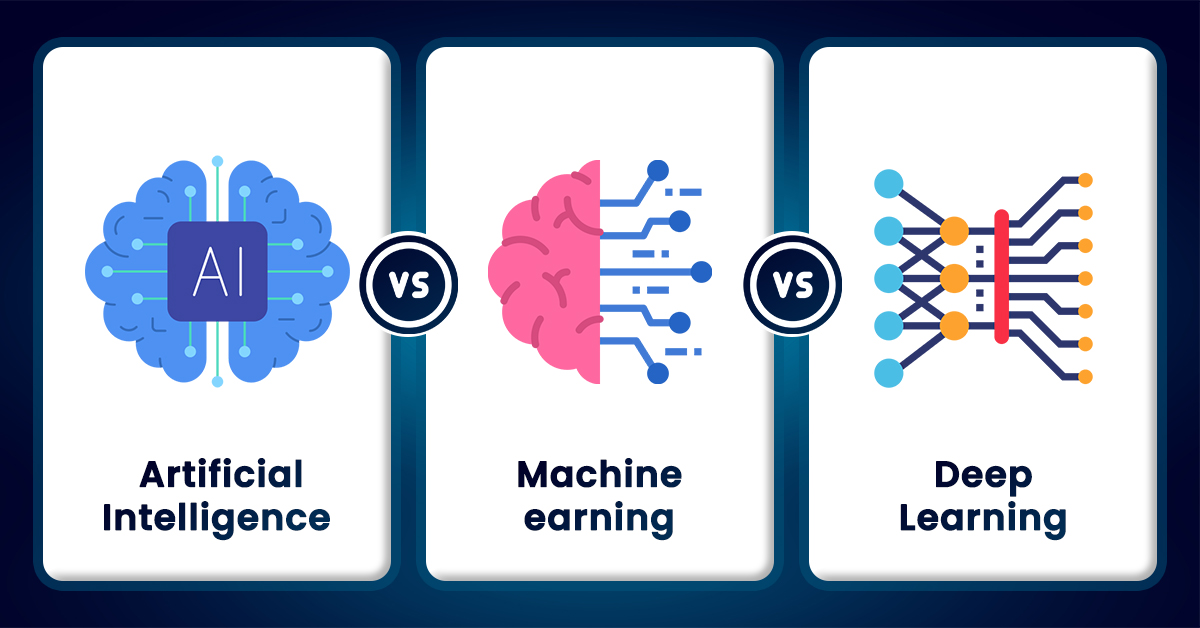

New AI refers to the latest developments in artificial intelligence technology, characterized by more sophisticated algorithms, increased processing power, and enhanced data collection methods. These advancements allow AI systems to perform tasks that were previously thought to be exclusive to human intelligence.

Key Features of New AI

- Deep Learning: Utilizing neural networks with multiple layers, deep learning enables machines to analyze vast amounts of data and recognize patterns with remarkable accuracy.

- Natural Language Processing (NLP): NLP allows computers to understand and respond to human language in a way that is both meaningful and contextually relevant.

- Computer Vision: This technology enables machines to interpret and understand visual information from the world, leading to innovations in areas like autonomous vehicles and facial recognition.

- Reinforcement Learning: By using trial and error methods, reinforcement learning allows AI systems to learn optimal behaviors through interaction with their environment.
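The trial-and-error idea behind reinforcement learning can be illustrated with a minimal sketch. It assumes a toy "multi-armed bandit" environment and an epsilon-greedy strategy. The function name `run_bandit`, the reward probabilities, and the parameter values are all hypothetical choices made for illustration, not part of any particular AI product.

```python
import random

def run_bandit(true_rewards, steps=5000, epsilon=0.1, seed=0):
    """Epsilon-greedy agent on a toy bandit with win/lose (Bernoulli) rewards.

    true_rewards: hypothetical win probability of each action ("arm").
    Returns the agent's learned value estimates and how often it chose each arm.
    """
    rng = random.Random(seed)
    n_arms = len(true_rewards)
    estimates = [0.0] * n_arms   # learned average reward per arm
    counts = [0] * n_arms        # number of times each arm was tried

    for _ in range(steps):
        if rng.random() < epsilon:
            # Explore: try a random arm.
            arm = rng.randrange(n_arms)
        else:
            # Exploit: pick the arm that currently looks best.
            arm = max(range(n_arms), key=lambda a: estimates[a])

        # The environment responds with a reward of 1 or 0.
        reward = 1.0 if rng.random() < true_rewards[arm] else 0.0

        # Incrementally update the running average for the chosen arm.
        counts[arm] += 1
        estimates[arm] += (reward - estimates[arm]) / counts[arm]

    return estimates, counts

# Assumed environment: three actions with different success rates.
estimates, counts = run_bandit([0.2, 0.5, 0.8])
best_arm = max(range(len(counts)), key=lambda a: counts[a])
print("learned estimates:", [round(e, 2) for e in estimates])
print("most chosen arm:", best_arm)
```

The agent is never told which action is best. It tries actions, observes rewards, and gradually favors the action with the highest estimated payoff, which is the core loop that larger reinforcement learning systems build on.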

The Impact of New AI

The impact of new AI technologies is being felt across various sectors:

Healthcare

In healthcare, AI is changing diagnostics and treatment planning. Machine learning algorithms can analyze medical images quickly and, for some tasks, flag subtle findings that manual review might miss, supporting earlier detection of diseases such as cancer. Additionally, personalized medicine is becoming more practical as AI helps tailor treatments based on individual genetic profiles.

Finance

The finance industry benefits from new AI through improved fraud detection systems and automated trading strategies. By analyzing vast datasets in real time, AI can identify anomalies that may indicate fraudulent activity and support more data-driven forecasts of market trends.
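As a rough illustration of anomaly-based fraud screening, the sketch below flags transactions whose amounts sit far from the typical value. It uses a modified z-score based on the median absolute deviation. Production fraud systems are far more elaborate, and the function name `flag_anomalies`, the threshold, and the sample amounts are assumptions made for this example.

```python
from statistics import median

def flag_anomalies(amounts, threshold=3.5):
    """Return indices of amounts whose modified z-score exceeds the threshold.

    Uses the median and the median absolute deviation (MAD), which are less
    distorted by extreme values than the mean and standard deviation.
    """
    med = median(amounts)
    mad = median(abs(x - med) for x in amounts)
    if mad == 0:
        # All values are (nearly) identical; nothing stands out.
        return []
    return [
        i for i, x in enumerate(amounts)
        if 0.6745 * abs(x - med) / mad > threshold
    ]

# Hypothetical transaction amounts for one account.
amounts = [42.0, 38.5, 51.0, 47.2, 40.1, 44.9, 980.0, 39.7]
flagged = flag_anomalies(amounts)
print("flagged indices:", flagged)
```

A flagged transaction is only a candidate for review. In practice, such a signal would be combined with many others before any action is taken.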

Retail

In retail, AI enhances customer experiences through personalized recommendations and chatbots that provide instant support. Inventory management systems powered by AI optimize stock levels based on consumer demand predictions.

The Future of New AI

The future of new AI holds immense potential. As technology continues to advance, we can expect even more innovative applications across various domains. However, this growth also brings challenges such as ethical considerations around data privacy and the need for regulations to ensure responsible use.

The rise of new AI marks an exciting chapter in technological evolution. By harnessing its power responsibly, society can unlock countless opportunities for improvement in quality of life worldwide.

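6 Key Benefits of New AI: Boosting Efficiency, Accuracy, and Innovation

- Enhanced Efficiency

- Improved Accuracy

- Personalization

- Cost Savings

- Innovation

- Scalability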

Exploring the Challenges of New AI: Job Displacement, Privacy, Bias, Security, Ethics, and Dependency

- Job Displacement

- Data Privacy Concerns

- Bias and Discrimination

- Security Risks

- Ethical Dilemmas

- Dependency on Technology

Enhanced Efficiency

Enhanced efficiency is one of the most significant advantages brought by new AI technologies. By streamlining processes and automating repetitive tasks, AI enables businesses to operate more smoothly and effectively. This automation reduces the time and effort required for manual labor, allowing employees to focus on more strategic and creative aspects of their work. As a result, productivity levels rise, and companies can achieve more in less time. Additionally, AI-driven tools can analyze vast amounts of data quickly, providing valuable insights that further optimize operations and decision-making processes. This increased efficiency not only boosts output but also enhances the overall quality of products and services offered.

Improved Accuracy

Another significant advantage of new AI is improved accuracy in data analysis and decision-making. AI systems can process vast amounts of data consistently, identifying patterns and correlations that human analysts might overlook. This allows decisions to be grounded in complex datasets, leading to more reliable outcomes in various fields. For instance, in healthcare, AI can enhance diagnostic accuracy by detecting subtle indicators in medical images that the human eye might miss. Similarly, in finance, AI can inform forecasts of market trends by combining historical data with real-time information. This level of accuracy not only improves performance but also helps organizations make strategic decisions with greater confidence.

Personalization

New AI technologies have revolutionized the way personalized experiences are delivered to users by leveraging advanced algorithms that understand individual preferences and behaviors. By analyzing vast amounts of data, AI can tailor content, recommendations, and services to meet the unique needs of each user. This level of personalization enhances user engagement and satisfaction, whether it’s through customized product suggestions in e-commerce, personalized playlists in music streaming services, or tailored learning paths in educational platforms. As a result, users receive more relevant and meaningful interactions, fostering a deeper connection with the technology they use every day.
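One simple way to picture how preferences turn into recommendations is to find the user whose ratings look most similar and suggest items that user liked. The sketch below does this with cosine similarity. The users, items, ratings, and the convention that a rating of 0 means "not yet rated" are all hypothetical, and real recommendation systems use considerably richer models.

```python
import math

def cosine(u, v):
    """Cosine similarity between two equal-length rating vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0

def recommend(target, ratings, items):
    """Suggest items the most similar user rated but the target has not."""
    others = [user for user in ratings if user != target]
    nearest = max(others, key=lambda user: cosine(ratings[target], ratings[user]))
    return [
        items[i]
        for i, (mine, theirs) in enumerate(zip(ratings[target], ratings[nearest]))
        if mine == 0 and theirs > 0   # assumption: 0 means "not rated"
    ]

# Hypothetical catalogue and ratings on a 1-5 scale.
items = ["jazz", "rock", "podcast", "classical"]
ratings = {
    "ana": [5, 0, 0, 4],
    "ben": [4, 0, 3, 5],
    "cy":  [0, 5, 4, 0],
}
suggestions = recommend("ana", ratings, items)
print("suggestions for ana:", suggestions)
```

Here the tastes of "ana" overlap most with those of "ben", so the item "ben" rated that "ana" has not tried is suggested.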

Cost Savings

New AI technologies also offer potential cost savings across various industries. By optimizing operations, AI systems can streamline processes, increasing efficiency and reducing waste. They can also reduce human error by providing accurate data analysis and predictive insights, which helps businesses avoid costly mistakes. Furthermore, AI-driven automation reduces the need for manual labor in repetitive tasks, allowing companies to allocate resources more effectively. In the long run, these improvements not only enhance productivity but also contribute to substantial financial savings, making AI a valuable asset for businesses looking to maintain a competitive edge.

Innovation

The continuous development of new AI technologies serves as a catalyst for innovation across various industries, driving the creation of groundbreaking solutions and advancements. By leveraging sophisticated algorithms and enhanced data processing capabilities, AI is enabling businesses to tackle complex challenges in ways previously unimaginable. This wave of innovation is not only improving efficiency and productivity but also opening up new possibilities for products and services that enhance everyday life. From healthcare to finance, education to entertainment, the transformative power of AI is fostering an environment where creativity thrives, pushing boundaries and setting new standards for what can be achieved in the digital age.

Scalability

Scalability is another advantage of new AI: well-designed systems can be extended to handle large volumes of data or tasks. This adaptability is crucial for businesses and organizations facing ever-growing demands and data influxes. With scalable AI solutions, companies can process and analyze vast datasets without compromising performance or accuracy. This capability means that as a business expands, its AI infrastructure can grow alongside it, maintaining smooth operations and enabling real-time decision-making. As a result, scalable AI not only enhances productivity but also provides the flexibility needed to adapt to changing market dynamics and customer needs.

Job Displacement

The rapid advancement of AI automation presents a significant challenge in the form of job displacement. As AI systems become capable of performing tasks that were traditionally carried out by humans, there is a growing concern about the potential loss of employment opportunities for certain segments of the workforce. Industries such as manufacturing, customer service, and transportation are particularly vulnerable, as repetitive and routine tasks are increasingly being automated. This shift could lead to reduced demand for certain job roles, leaving many workers facing unemployment or the need to reskill in order to adapt to the changing job market. While AI has the potential to create new jobs and boost productivity, the transition period may pose difficulties for those whose skills do not align with emerging technological demands.

Data Privacy Concerns

The rapid advancement of AI technology brings with it significant data privacy concerns, as the effectiveness of AI systems often hinges on the collection and analysis of vast amounts of personal data. This raises critical questions about how such data is stored, shared, and protected. There is a risk that sensitive information could be accessed by unauthorized parties or used for purposes beyond the original intent, leading to potential misuse. Moreover, individuals may not always be fully aware of what data is being collected and how it is being utilized, which can undermine trust in AI technologies. As AI continues to integrate into various aspects of life, ensuring robust data protection measures and transparent practices becomes essential to safeguarding individual privacy rights.

Bias and Discrimination

One of the significant concerns with new AI technologies is the potential for bias and discrimination. AI algorithms are often trained on large datasets that may contain historical biases, whether intentional or not. If these biases are not identified and corrected, they can be perpetuated and even amplified by the AI systems, leading to unfair or discriminatory outcomes in decision-making processes. For instance, in areas such as hiring, lending, and law enforcement, biased algorithms can disproportionately disadvantage certain groups based on race, gender, or socioeconomic status. This issue highlights the importance of ensuring that AI systems are developed with fairness and transparency in mind and underscores the need for ongoing scrutiny and refinement of the data used to train these models.
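One basic check for this kind of bias is to compare how often a system produces a favorable outcome for each group. The sketch below computes per-group approval rates and their ratio. The group labels and decisions are made-up data, the function names are hypothetical, and the 0.8 cutoff follows the commonly cited "four-fifths" rule of thumb rather than a universal standard.

```python
from collections import defaultdict

def selection_rates(decisions):
    """Share of favorable outcomes per group, from (group, approved) pairs."""
    totals = defaultdict(int)
    approved = defaultdict(int)
    for group, outcome in decisions:
        totals[group] += 1
        approved[group] += int(outcome)
    return {group: approved[group] / totals[group] for group in totals}

def disparate_impact_ratio(rates):
    """Lowest group rate divided by the highest (1.0 means equal rates)."""
    highest = max(rates.values())
    return min(rates.values()) / highest if highest else 1.0

# Hypothetical model decisions: group A approved 6 of 10, group B 3 of 10.
decisions = (
    [("A", True)] * 6 + [("A", False)] * 4 +
    [("B", True)] * 3 + [("B", False)] * 7
)
rates = selection_rates(decisions)
ratio = disparate_impact_ratio(rates)
print("approval rates:", rates)
print("ratio:", ratio, "-> review needed" if ratio < 0.8 else "-> within rule of thumb")
```

A low ratio does not prove discrimination on its own, but it is a signal that the training data and the model's decisions deserve closer scrutiny.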

Security Risks

As AI technology becomes increasingly sophisticated, it introduces significant security risks that cannot be overlooked. One major concern is the potential for cyberattacks targeting AI systems themselves. Hackers could exploit vulnerabilities in AI algorithms or data inputs to manipulate outcomes, leading to harmful consequences. Additionally, there is the risk of AI being used for malicious purposes, such as creating deepfakes or automating large-scale phishing attacks. These scenarios highlight the urgent need for robust security measures and regulatory frameworks to protect against the misuse of AI and ensure that its development prioritizes safety and ethical considerations.

Ethical Dilemmas

The rapid advancement of new AI technologies brings with it significant ethical dilemmas, particularly concerning accountability, transparency, and fairness. As intelligent systems increasingly influence critical aspects of daily life, from healthcare decisions to criminal justice outcomes, the question of who is responsible when these systems fail becomes paramount. Furthermore, the opacity of complex algorithms often makes it challenging to understand how decisions are made, raising concerns about transparency and the potential for bias. This lack of clarity can lead to unfair treatment or discrimination against certain groups, undermining trust in AI applications. Addressing these ethical challenges requires robust frameworks and regulations to ensure that AI systems are developed and deployed responsibly, with a focus on protecting individual rights and promoting societal well-being.

Dependency on Technology

The increasing dependency on AI technology poses a significant concern as it can lead to diminished critical thinking skills and human judgment. When individuals and organizations become overly reliant on AI solutions for decision-making, there is a risk of losing essential problem-solving abilities and the capacity for independent thought. This over-reliance may result in a loss of autonomy, where people defer too readily to automated systems without questioning their outputs or considering alternative perspectives. Consequently, this could lead to scenarios where human control is compromised, and decisions are made without the nuanced understanding that only human insight can provide. As AI continues to integrate into various aspects of life, it is crucial to maintain a balance that preserves human agency and the ability to think critically.