Revolutionizing Industries: The Impact of AI and Robotics

AI and Robotics: Transforming the Future

The fields of artificial intelligence (AI) and robotics are rapidly evolving, transforming industries, economies, and everyday life. As these technologies advance, they offer unprecedented opportunities for innovation and efficiency.

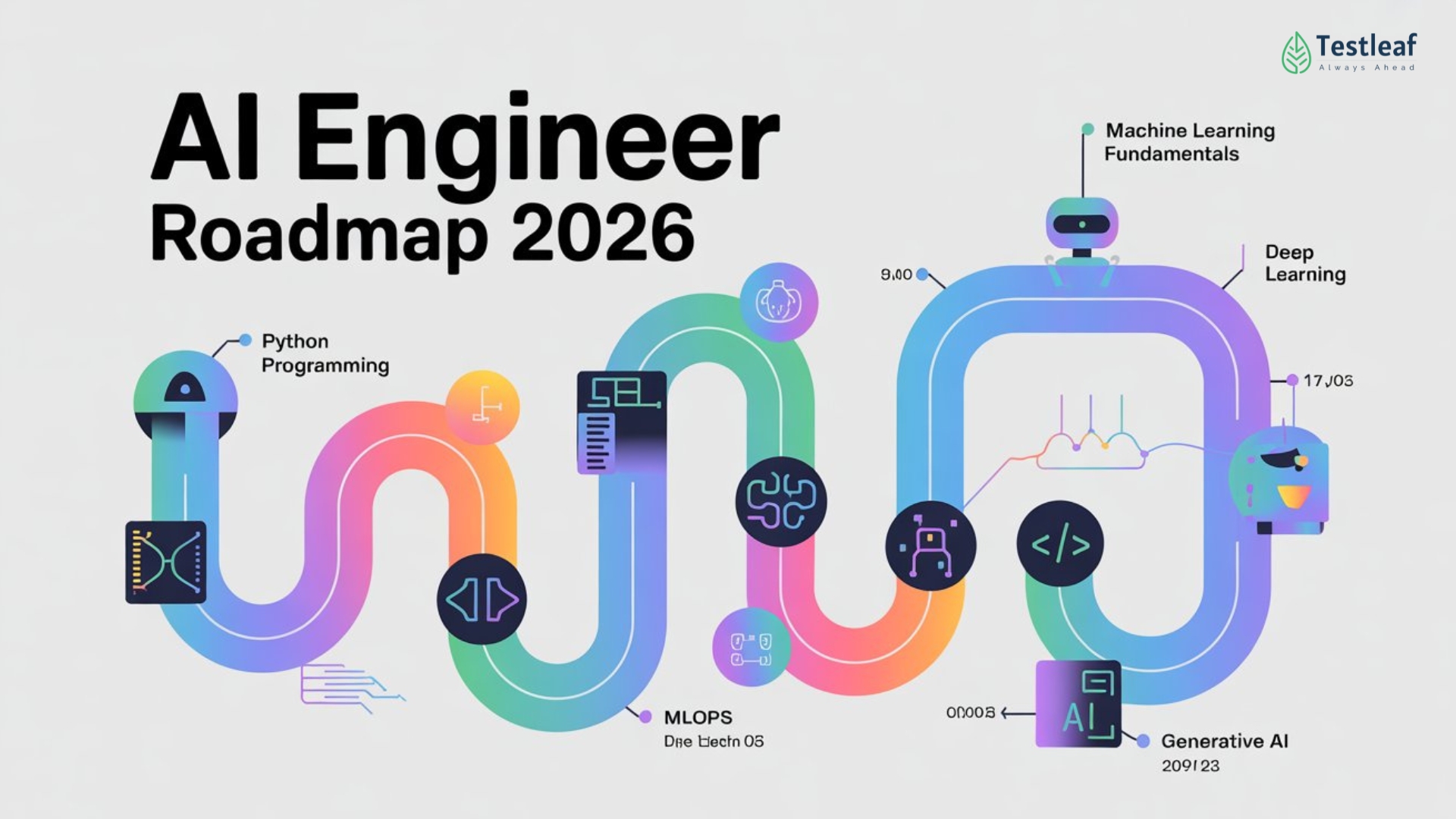

Understanding AI and Robotics

Artificial Intelligence (AI) refers to the simulation of human intelligence processes by machines, especially computer systems. These processes include learning (the acquisition of information and rules for using it), reasoning (using rules to reach approximate or definite conclusions), and self-correction.

Robotics, on the other hand, involves the design, construction, operation, and use of robots. Robots are machines capable of carrying out a complex series of actions automatically, especially those programmable by a computer.

The Intersection of AI and Robotics

The integration of AI into robotics has led to significant advancements in how robots perceive their environment, make decisions, and perform tasks. This synergy is creating robots that can learn from experiences, adapt to new situations, and execute tasks with precision.

- Autonomous Vehicles: Self-driving cars are one of the most visible examples of AI-powered robotics. These vehicles use sensors and AI algorithms to navigate roads safely.

- Healthcare: Robots equipped with AI are assisting in surgeries with high precision or providing care in hospitals by delivering medications or conducting routine checks.

- Manufacturing: In factories worldwide, robotic arms powered by AI are optimizing production lines by improving speed and reducing errors.
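The perceive-decide-act cycle described at the start of this section can be sketched as a small control loop. This is a minimal illustration under stated assumptions, not how any particular robot works. The range-sensor reading, the distance thresholds, and the function names `perceive`, `decide`, `act`, and `run` are all hypothetical. The decision step is also a hand-written rule; an AI-driven robot would typically use a learned model at that point.

```python
from dataclasses import dataclass


@dataclass
class Observation:
    obstacle_distance_m: float  # hypothetical range-sensor reading, in meters


def perceive(raw_reading: float) -> Observation:
    """Perception: turn a raw sensor value into a structured observation (negative noise clamped to 0)."""
    return Observation(obstacle_distance_m=max(0.0, raw_reading))


def decide(obs: Observation, stop_dist: float = 0.5, slow_dist: float = 2.0) -> float:
    """Decision: pick a target speed in m/s using hypothetical hand-tuned thresholds."""
    if obs.obstacle_distance_m < stop_dist:
        return 0.0  # too close: stop
    if obs.obstacle_distance_m < slow_dist:
        return 0.3  # obstacle nearby: slow down
    return 1.0      # path clear: cruise


def act(speed: float, command_log: list[float]) -> None:
    """Action: stand-in for sending a speed command to the motor controllers."""
    command_log.append(speed)


def run(readings: list[float]) -> list[float]:
    """Run one perceive-decide-act iteration per sensor update and return the issued commands."""
    commands: list[float] = []
    for raw in readings:
        act(decide(perceive(raw)), commands)
    return commands


if __name__ == "__main__":
    print(run([5.0, 1.5, 0.4, -0.1]))  # [1.0, 0.3, 0.0, 0.0]
```

Keeping the three stages as separate functions lets each one be replaced independently. For example, the rule in `decide` could give way to a trained policy without touching the sensor or motor code.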

The Benefits of AI-Driven Robotics

The combination of AI and robotics offers numerous benefits:

- Increased Efficiency: Robots can work continuously without breaks and avoid the fatigue-related errors that human workers may make over long shifts.

- Enhanced Safety: In hazardous environments such as mining or deep-sea exploration, robots can perform dangerous tasks while keeping humans safe.

- Evolving Capabilities: As machine learning algorithms improve over time, robotic systems become more adept at handling complex tasks previously thought impossible for machines.

The Challenges Ahead

Despite their potential benefits, integrating AI with robotics also presents challenges:

- Ethical Concerns: The rise of intelligent machines raises questions about job displacement and privacy. Ensuring that ethical guidelines govern their deployment is crucial.

- Sophisticated Decision-Making: Developing algorithms that allow robots to make nuanced decisions similar to those humans make remains a complex challenge for researchers.

- Cultural Acceptance: Societal acceptance varies around the world; therefore, public education about the benefits of these technologies is essential for widespread adoption.

The Future Outlook

The future holds exciting possibilities as AI continues to merge with robotics across various sectors, from smart homes where robotic assistants handle daily chores to advanced medical procedures performed with increasing autonomy. This evolution promises transformative changes ahead!

Navigating this landscape requires collaboration among technologists, policymakers, businesses, and academia to ensure the responsible development and deployment of these powerful tools, which are already shaping tomorrow's world today.

6 Essential Tips for Navigating the World of AI and Robotics

- Stay updated on the latest advancements in AI and robotics.

- Understand the ethical implications of AI and robotics technology.

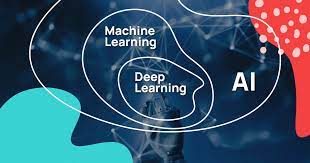

- Learn programming languages commonly used in AI and robotics, such as Python and C++.

- Collaborate with experts in the field to enhance your knowledge and skills.

- Experiment with building simple AI or robotic projects to gain hands-on experience.

- Always prioritize safety measures when working with AI and robotic systems.

Stay updated on the latest advancements in AI and robotics.

Staying updated on the latest advancements in AI and robotics is crucial for anyone interested in technology, as these fields are rapidly evolving and reshaping industries worldwide. By keeping abreast of new developments, individuals can better understand how these technologies can be applied to solve real-world problems and improve efficiency across various sectors. Continuous learning through reputable sources like academic journals, industry conferences, and online courses ensures that one remains informed about cutting-edge innovations. This knowledge not only enhances personal expertise but also provides a competitive edge in the job market, where demand for skills related to AI and robotics is increasing.

Understand the ethical implications of AI and robotics technology.

Understanding the ethical implications of AI and robotics technology is crucial as these innovations become increasingly integrated into society. As AI systems and robots gain more autonomy and decision-making power, they raise important questions about privacy, accountability, and fairness. For instance, the deployment of AI in surveillance systems can enhance security but also risks infringing on individual privacy rights. Similarly, autonomous robots in industries like healthcare or transportation must be programmed to make ethical decisions that prioritize human safety. Ensuring that these technologies are developed and used responsibly requires a collaborative effort among technologists, ethicists, policymakers, and the public to establish guidelines that protect human values while fostering innovation.

Learn programming languages commonly used in AI and robotics, such as Python and C++.

Learning programming languages like Python and C++ is essential for anyone interested in AI and robotics. Python is favored for its simplicity and readability, making it an excellent choice for beginners and experts alike. Its ecosystem includes widely used libraries such as TensorFlow and PyTorch, which are central to developing AI models. C++, on the other hand, is valued for its performance and fine-grained control over hardware resources. This makes it well suited to robotics code where timing is critical, such as low-level motor control. By mastering both languages, individuals can develop sophisticated algorithms, control robotic systems, and contribute to cutting-edge innovations in AI and robotics.

Collaborate with experts in the field to enhance your knowledge and skills.

Collaborating with experts in the field of AI and robotics is an invaluable strategy for enhancing your knowledge and skills. By working alongside seasoned professionals, you gain access to a wealth of experience and insights that can accelerate your learning curve. Experts can provide guidance on navigating complex challenges, introduce you to cutting-edge technologies, and offer practical advice based on real-world applications. This collaboration fosters a dynamic exchange of ideas, encouraging innovation and creativity. Additionally, building a network of knowledgeable contacts in the industry can open up opportunities for further education, research partnerships, and career advancement. Engaging with experts not only deepens your understanding but also positions you at the forefront of advancements in AI and robotics.

Experiment with building simple AI or robotic projects to gain hands-on experience.

Experimenting with building simple AI or robotic projects is a fantastic way to gain hands-on experience and deepen your understanding of these cutting-edge technologies. By starting with small, manageable projects, you can learn the fundamentals of programming, electronics, and machine learning in a practical context. This approach allows you to see firsthand how AI algorithms work and how robots can be programmed to perform specific tasks. Whether it’s creating a basic chatbot or assembling a simple robotic arm, these projects provide valuable insights into the challenges and possibilities within the field. Additionally, such experimentation fosters problem-solving skills and creativity, equipping you with the knowledge needed to tackle more complex projects in the future.
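As an example of the kind of starter project mentioned above, here is a minimal keyword-matching chatbot in Python. It is a rule-based sketch, not a machine learning model. The keyword rules, the responses, and the names `RULES`, `FALLBACK`, and `reply` are hypothetical choices made for illustration.

```python
import re

# Hypothetical keyword rules: the first pattern that matches decides the response.
RULES = [
    (re.compile(r"\b(hi|hello|hey)\b", re.IGNORECASE), "Hello! Ask me about robots."),
    (re.compile(r"\brobots?\b", re.IGNORECASE), "Robots are machines that carry out actions automatically."),
    (re.compile(r"\b(bye|goodbye)\b", re.IGNORECASE), "Goodbye!"),
]
FALLBACK = "Sorry, I don't understand that yet."


def reply(message: str) -> str:
    """Return the response for the first rule whose pattern appears in the message."""
    for pattern, response in RULES:
        if pattern.search(message):
            return response
    return FALLBACK


if __name__ == "__main__":
    # Scripted conversation so the demo runs without keyboard input.
    for msg in ["Hi!", "What can robots do?", "What's the weather?", "bye"]:
        print(f"you> {msg}")
        print(f"bot> {reply(msg)}")
```

Once this loop works, replacing the hand-written rules with a small trained text classifier is one possible next step toward a project that actually involves machine learning.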

Always prioritize safety measures when working with AI and robotic systems.

When working with AI and robotic systems, prioritizing safety measures is essential to ensure the well-being of both humans and machines. These advanced technologies, while offering significant benefits, can pose risks if not properly managed. Implementing robust safety protocols helps prevent accidents and malfunctions that could lead to injury or damage. This includes regular maintenance checks, employing fail-safes, and ensuring that all personnel are adequately trained to interact with these systems. By focusing on safety, organizations can mitigate potential hazards and create a secure environment where AI and robotics can thrive alongside human workers.
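One common software fail-safe is a watchdog. If the controller stops receiving fresh commands (heartbeats) within a timeout, the system switches to a safe state, such as stopping the motors, and stays there until a person resets it. The Python sketch below illustrates only the idea. The `Watchdog` class, its timeout, and the stop callback are hypothetical, and a production robot would typically back such logic with hardware safety measures like an emergency-stop circuit.

```python
import time
from typing import Callable


class Watchdog:
    """Hypothetical software watchdog: calls a safe-stop callback when heartbeats stop arriving."""

    def __init__(self, timeout_s: float, on_timeout: Callable[[], None],
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.timeout_s = timeout_s
        self.on_timeout = on_timeout
        self.clock = clock  # injectable so the logic can be tested without real waiting
        self.last_heartbeat = clock()
        self.tripped = False

    def heartbeat(self) -> None:
        """Record that a fresh, valid command arrived; ignored once the watchdog has tripped."""
        if not self.tripped:
            self.last_heartbeat = self.clock()

    def check(self) -> bool:
        """Call from the control loop; returns True only while the system may keep running."""
        if self.tripped:
            return False
        if self.clock() - self.last_heartbeat > self.timeout_s:
            self.tripped = True  # latch the fault: stay stopped until reset() is called
            self.on_timeout()
            return False
        return True

    def reset(self) -> None:
        """Manual reset, intended for after an operator has inspected the system."""
        self.tripped = False
        self.last_heartbeat = self.clock()


if __name__ == "__main__":
    fake_now = [0.0]
    events: list[str] = []
    wd = Watchdog(timeout_s=0.5, on_timeout=lambda: events.append("STOP motors"),
                  clock=lambda: fake_now[0])
    fake_now[0] = 0.3
    wd.heartbeat()
    print(wd.check())  # True: heartbeat was just received
    fake_now[0] = 1.0
    print(wd.check())  # False: 0.7 s since the last heartbeat exceeds 0.5 s
    print(events)      # ['STOP motors']
```

Latching the fault, rather than resuming automatically when heartbeats return, is a deliberate choice here. It forces a human check before the machine moves again.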