Exploring the Synergy of Artificial Intelligence and Machine Learning

Understanding Artificial Intelligence and Machine Learning

Artificial Intelligence (AI) and Machine Learning (ML) are two of the most transformative technologies in use today. They are reshaping industries, improving efficiency, and enabling innovations that were once considered science fiction.

What is Artificial Intelligence?

Artificial Intelligence refers to the simulation of human intelligence processes by machines, especially computer systems. These processes include learning (acquiring information and the rules for using it), reasoning (applying those rules to reach approximate or definite conclusions), and self-correction. AI is commonly divided into two categories:

- Narrow AI: Also known as weak AI, this type is designed to perform a single, well-defined task (such as facial recognition or internet search) and cannot operate outside that scope.

- General AI: Also known as strong AI, this type would be able to perform any intellectual task that a human can. It remains largely theoretical at this point.

The Role of Machine Learning

Machine Learning is a subset of AI that uses algorithms and statistical models to let computers improve their performance on a task through experience. Instead of being explicitly programmed with rules for a specific task, ML systems learn patterns from data.
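To make the contrast concrete, here is a minimal Python sketch rather than a production method. The function names, the single feature (number of links in a message), and the toy data are all assumptions made for illustration. The first function encodes a rule a developer picked by hand; the second derives its cutoff from labeled examples.

```python
def hand_coded_rule(num_links):
    """Explicit programming: a developer chose the cutoff of 5 by hand."""
    return num_links > 5


def learn_threshold(examples):
    """Learning from data: choose the cutoff with the fewest training errors.

    `examples` is a list of (num_links, is_spam) pairs (hypothetical data).
    """
    candidates = sorted({value for value, _ in examples})
    best_cutoff, best_errors = candidates[0], len(examples) + 1
    for cutoff in candidates:
        # A message is predicted as spam when its value exceeds the cutoff.
        errors = sum((value > cutoff) != is_spam for value, is_spam in examples)
        if errors < best_errors:
            best_cutoff, best_errors = cutoff, errors
    return best_cutoff


# Toy labeled data, invented for this example.
training_data = [(0, False), (1, False), (2, False), (3, True), (4, True), (7, True)]
learned_cutoff = learn_threshold(training_data)


def learned_rule(num_links):
    """Same shape as the hand-coded rule, but the cutoff came from the data."""
    return num_links > learned_cutoff
```

With this toy data the learned cutoff is 2, so a message with 3 links is flagged by the learned rule but missed by the hand-coded one. Supplying different examples changes the cutoff without changing the code.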

Types of Machine Learning

- Supervised Learning: The algorithm is trained on labeled data, much like teaching a child with examples. For instance, an algorithm can learn to recognize cats by being shown thousands of images, each labeled as containing a cat or not.

- Unsupervised Learning: The algorithm works with unlabeled data and tries to identify patterns or groupings without any guidance. Clustering algorithms fall under this category.

- Semi-supervised Learning: This approach uses both labeled and unlabeled data for training. It’s useful when acquiring a fully labeled dataset is expensive or time-consuming.

- Reinforcement Learning: Here, an agent learns how to achieve a goal in an uncertain environment by taking actions and receiving feedback from those actions in terms of rewards or penalties.
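The difference between the first two categories in the list above can be sketched in a few lines of Python. This is an illustrative sketch under stated assumptions: the nearest-neighbor classifier and the two-cluster k-means routine are simple stand-ins chosen for brevity, and the function names and toy data are invented for this example.

```python
import math


def nearest_neighbor_predict(labeled_points, query):
    """Supervised: return the label of the closest labeled training point."""
    _, closest_label = min(labeled_points, key=lambda pair: math.dist(pair[0], query))
    return closest_label


def two_means_1d(values, iterations=10):
    """Unsupervised: split unlabeled numbers into two groups (k-means, k=2)."""
    centers = [min(values), max(values)]  # simple deterministic starting point
    groups = [[], []]
    for _ in range(iterations):
        groups = [[], []]
        for value in values:
            # Assign each value to whichever center is nearer.
            nearer = 0 if abs(value - centers[0]) <= abs(value - centers[1]) else 1
            groups[nearer].append(value)
        # Move each center to the mean of its group (keep it if the group is empty).
        centers = [sum(g) / len(g) if g else c for g, c in zip(groups, centers)]
    return centers, groups


# Supervised: every training point carries a label (toy data).
labeled = [((1.0, 1.0), "cat"), ((1.2, 0.8), "cat"),
           ((5.0, 5.0), "dog"), ((4.8, 5.3), "dog")]
prediction = nearest_neighbor_predict(labeled, (1.1, 0.9))

# Unsupervised: no labels at all, only raw values (toy data).
centers, groups = two_means_1d([1.0, 1.5, 2.0, 9.0, 9.5, 10.0])
```

The supervised function needs the labels to answer anything, while the clustering routine discovers the two groups on its own but cannot say what they mean.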

The Impact on Industries

The implications of AI and ML are vast across various sectors:

- Healthcare: From predictive analytics for patient care to AI-assisted medical imaging, these tools can help clinicians diagnose diseases faster and more accurately.

- Finance: Algorithms detect fraudulent transactions in real time, and personalized banking experiences improve customer satisfaction.

- Agriculture: Precision farming uses AI to predict and improve crop yields based on factors such as weather conditions and soil health.

- E-commerce: Personalized recommendations improve user experience while inventory management becomes more efficient through demand forecasting.
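As one small illustration of the finance example above, the sketch below flags a transaction whose amount is far from a customer's usual spending. It is a deliberately simple statistical baseline, not how any particular bank detects fraud; the function names, the threshold of three standard deviations, and the amounts are assumptions made for this example.

```python
import statistics


def fit_baseline(past_amounts):
    """Summarize a customer's normal spending as (mean, standard deviation)."""
    return statistics.mean(past_amounts), statistics.stdev(past_amounts)


def is_suspicious(amount, baseline, z_threshold=3.0):
    """Flag an amount that lies more than `z_threshold` deviations from the mean."""
    mean, stdev = baseline
    z_score = abs(amount - mean) / stdev
    return z_score > z_threshold


# Hypothetical transaction history for one customer.
past_amounts = [20, 25, 22, 19, 24, 21, 23]
baseline = fit_baseline(past_amounts)

flag_large = is_suspicious(500, baseline)   # far outside the usual range
flag_normal = is_suspicious(26, baseline)   # slightly high but ordinary
```

Real systems typically combine many more signals (merchant, location, timing) and learn the decision boundary from labeled fraud cases instead of using a fixed threshold.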

The Future Ahead

The future holds immense potential for further advancements in artificial intelligence and machine learning. As these technologies evolve, ethical considerations around privacy, security, bias, and employment must be addressed responsibly. Collaboration between policymakers, technologists, businesses, and society will be crucial in harnessing these powerful tools for the greater good.

The journey ahead is exciting as we continue exploring the possibilities that artificial intelligence and machine learning offer in transforming our world into a smarter place.

Understanding AI and Machine Learning: Key Questions Answered

- What is artificial intelligence?

- How does machine learning differ from artificial intelligence?

- What are the real-world applications of artificial intelligence and machine learning?

- What are the different types of machine learning algorithms?

- How do artificial intelligence and machine learning impact job roles and industries?

- What ethical considerations are associated with the use of AI and ML technologies?

- How can businesses leverage artificial intelligence and machine learning to gain a competitive advantage?

What is artificial intelligence?

Artificial Intelligence (AI) is a branch of computer science focused on creating systems capable of performing tasks that typically require human intelligence. These tasks include understanding natural language, recognizing patterns, solving problems, and making decisions. AI systems are designed to simulate human cognitive processes by learning from data and adapting to new inputs. They can be categorized into narrow AI, which is specialized for specific tasks like facial recognition or voice assistants, and general AI, which remains largely theoretical and would possess the ability to perform any intellectual task a human can do. The development of AI has profound implications across various sectors, from healthcare to finance, as it enhances efficiency and opens up new possibilities for innovation.

How does machine learning differ from artificial intelligence?

Machine learning is a subset of artificial intelligence that focuses on enabling machines to learn from data without being explicitly programmed. While artificial intelligence encompasses a broader concept of simulating human intelligence in machines, machine learning specifically deals with algorithms that improve their performance over time through experience. In essence, artificial intelligence represents the overarching goal of creating intelligent machines, whereas machine learning serves as a key technique within the field of AI, emphasizing the ability of systems to learn and adapt autonomously.

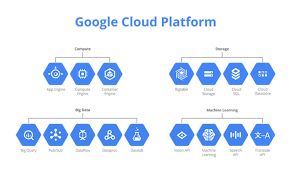

What are the real-world applications of artificial intelligence and machine learning?

Artificial intelligence and machine learning have numerous real-world applications that are transforming industries and enhancing everyday life. In healthcare, AI is used for predictive analytics, improving diagnostic accuracy, and personalizing patient care plans. In finance, algorithms help detect fraudulent activities and automate trading processes. The automotive industry benefits from AI through the development of autonomous vehicles and advanced driver-assistance systems. In retail, machine learning enhances customer experience by enabling personalized recommendations and optimizing inventory management. Additionally, AI-powered chatbots are revolutionizing customer service across various sectors by providing instant support and handling routine inquiries efficiently. These technologies are also making significant strides in agriculture with precision farming techniques that increase crop yields while minimizing resource usage. Overall, AI and machine learning are driving innovation across multiple domains, making processes more efficient and opening up new possibilities for growth and development.

What are the different types of machine learning algorithms?

Machine learning algorithms can be broadly categorized into several types, each suited to a different kind of task and data. The main types are supervised learning, where algorithms are trained on labeled data to make predictions or classifications; unsupervised learning, which finds patterns or groupings in data without predefined labels; and reinforcement learning, where an agent learns to make decisions by receiving feedback in the form of rewards or penalties from its environment. Semi-supervised learning combines labeled and unlabeled data, which helps when labels are scarce. Deep learning is often mentioned alongside these types, but it is better described as a family of methods: it uses neural networks with many layers to model complex patterns in large datasets, and it can be applied in supervised, unsupervised, or reinforcement settings. The choice among these depends on the specific problem being addressed.
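The reward-and-penalty loop of reinforcement learning can be sketched with a two-action "bandit" problem. This is a minimal sketch assuming an epsilon-greedy strategy, which is one common choice among many; the function name, the payout probabilities, and the parameter values are invented for illustration.

```python
import random


def run_epsilon_greedy(payout_probabilities, steps=2000, epsilon=0.1, seed=0):
    """Estimate the value of each action from reward feedback alone."""
    rng = random.Random(seed)
    num_actions = len(payout_probabilities)
    estimates = [0.0] * num_actions  # current value estimate per action
    pulls = [0] * num_actions        # how often each action was tried

    for _ in range(steps):
        if rng.random() < epsilon:
            # Explore: occasionally try a random action.
            action = rng.randrange(num_actions)
        else:
            # Exploit: otherwise pick the action that currently looks best.
            action = max(range(num_actions), key=lambda a: estimates[a])
        # The environment returns a reward of 1 or 0; the agent never
        # sees the underlying probabilities directly.
        reward = 1.0 if rng.random() < payout_probabilities[action] else 0.0
        pulls[action] += 1
        # Incremental average: nudge the estimate toward the observed reward.
        estimates[action] += (reward - estimates[action]) / pulls[action]

    return estimates, pulls


# Hypothetical environment: the second action pays off far more often.
estimates, pulls = run_epsilon_greedy([0.2, 0.8])
best_action = max(range(len(estimates)), key=lambda a: estimates[a])
```

The agent starts with no knowledge of either action and, through trial and feedback, ends up choosing the better-paying one most of the time.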

How do artificial intelligence and machine learning impact job roles and industries?

Artificial intelligence and machine learning are significantly transforming job roles and industries by automating routine tasks, enhancing decision-making processes, and creating new opportunities. In many sectors, AI and ML technologies streamline operations, allowing employees to focus on more strategic and creative tasks rather than repetitive ones. For instance, in manufacturing, AI-driven robots handle assembly lines with precision, reducing the need for manual labor while increasing productivity. In finance, algorithms analyze vast datasets to detect fraud or predict market trends faster than human analysts could. While some fear that automation might lead to job displacement, these technologies also generate demand for new roles in AI development, data analysis, and system maintenance. Industries are evolving as they integrate AI and ML into their workflows, fostering innovation and requiring a workforce skilled in digital literacy to adapt to these technological advancements.

What ethical considerations are associated with the use of AI and ML technologies?

The ethical considerations associated with the use of AI and ML technologies are multifaceted and crucial to address as these technologies become more integrated into daily life. One major concern is privacy, as AI systems often rely on large datasets that include personal information, raising questions about data security and consent. Additionally, there is the potential for bias in AI algorithms, which can lead to unfair or discriminatory outcomes if the data used to train these systems is not representative or contains inherent biases. Transparency is another significant issue, as the decision-making processes of complex AI models can be opaque, making it difficult for users to understand how conclusions are reached. Moreover, the impact of AI on employment must be considered, as automation could displace jobs without adequate measures for workforce transition. Finally, ensuring accountability in AI systems is essential to determine who is responsible when these technologies fail or cause harm. Addressing these ethical challenges requires a collaborative effort among technologists, policymakers, and society to develop frameworks that promote fair and responsible use of AI and ML.
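One way to make the bias concern measurable is to compare how often a system produces a favorable outcome for different groups. The sketch below computes that gap, sometimes called a demographic parity difference. It is only one of several fairness measures and does not establish on its own that a system is fair or unfair; the function names, group labels, and decisions are made-up illustrations.

```python
from collections import defaultdict


def approval_rates(decisions):
    """Return the share of favorable decisions per group.

    `decisions` is a list of (group, approved) pairs.
    """
    totals = defaultdict(int)
    approved_counts = defaultdict(int)
    for group, approved in decisions:
        totals[group] += 1
        approved_counts[group] += int(approved)
    return {group: approved_counts[group] / totals[group] for group in totals}


def parity_gap(decisions):
    """Difference between the highest and lowest group approval rates."""
    rates = approval_rates(decisions)
    return max(rates.values()) - min(rates.values())


# Hypothetical model outputs for two groups, invented for this example.
model_decisions = [
    ("A", True), ("A", True), ("A", True), ("A", False),
    ("B", True), ("B", False), ("B", False), ("B", False),
]
rates = approval_rates(model_decisions)
gap = parity_gap(model_decisions)
```

In this toy data, group A is approved 75% of the time and group B 25% of the time, a gap of 0.5 that would prompt a closer look at the training data and the model.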

How can businesses leverage artificial intelligence and machine learning to gain a competitive advantage?

Businesses can leverage artificial intelligence (AI) and machine learning (ML) to gain a competitive advantage by optimizing operations, enhancing customer experiences, and driving innovation. AI and ML can automate routine tasks, freeing up valuable human resources for more strategic activities. By analyzing vast amounts of data, these technologies provide insights into consumer behavior, allowing companies to tailor products and services to meet customer needs more effectively. Predictive analytics powered by AI can improve decision-making processes, enabling businesses to anticipate market trends and adjust strategies proactively. Additionally, AI-driven tools can enhance product development cycles by identifying inefficiencies and suggesting improvements. By integrating AI and ML into their operations, businesses not only increase efficiency but also foster innovation that sets them apart in the marketplace.