Understanding the Role of a Java Code Compiler in Software Development

The Role of a Java Code Compiler

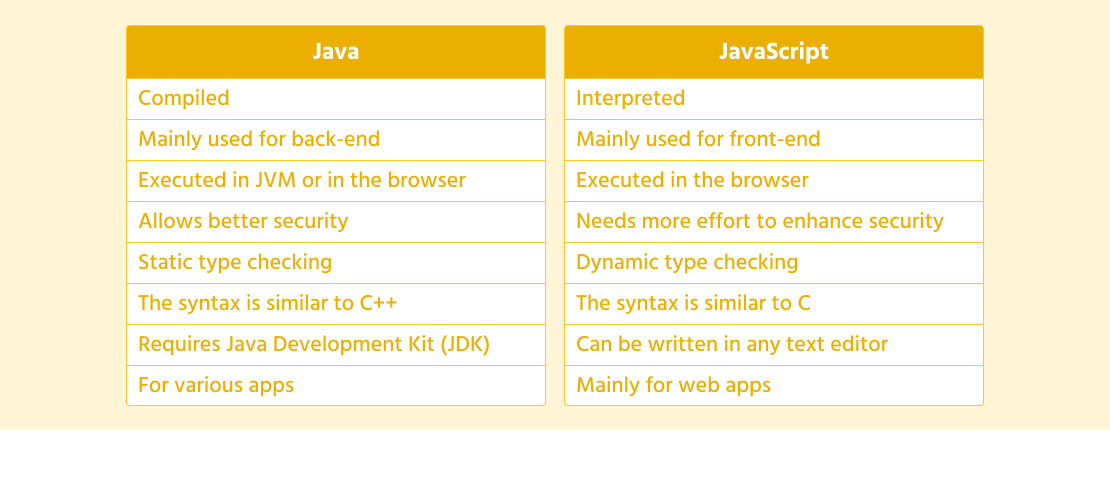

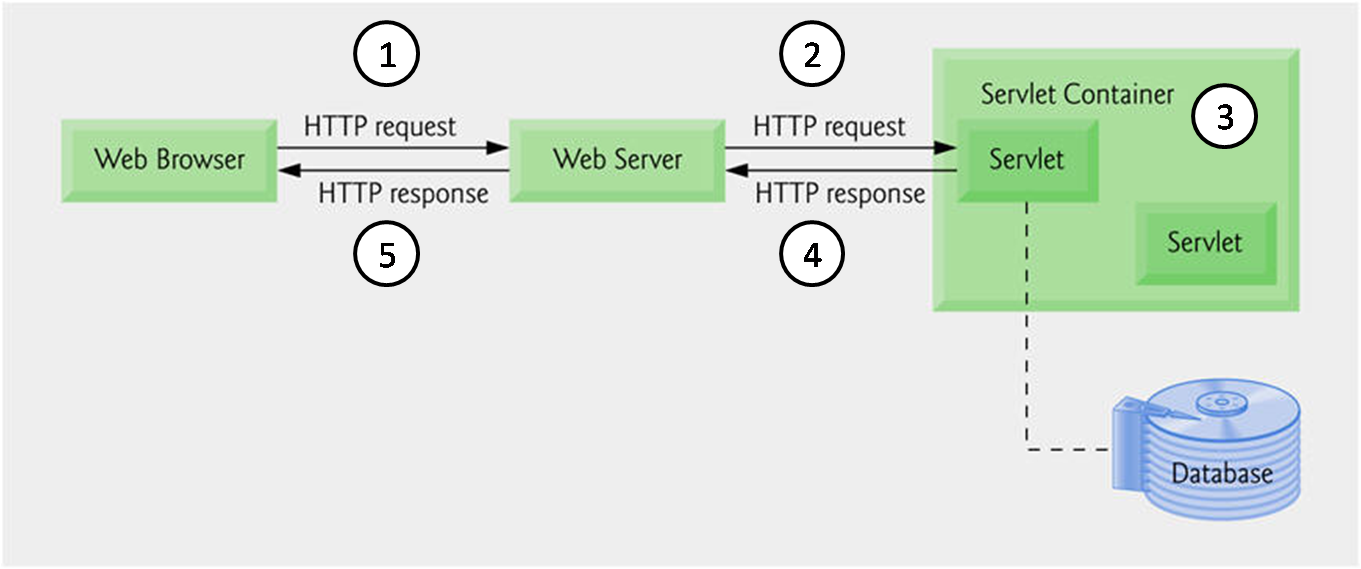

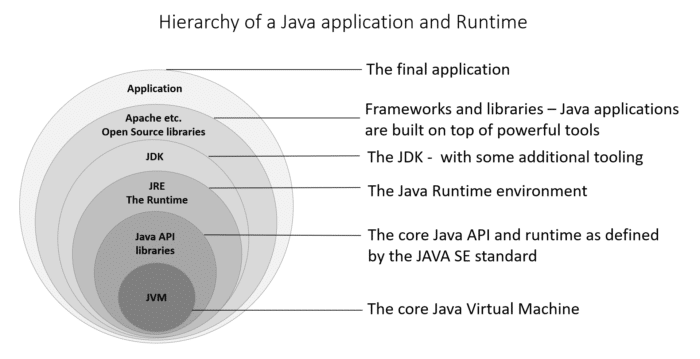

Java, a popular language for building a wide range of applications, relies on a key tool: the Java code compiler. The compiler translates human-readable Java source code into bytecode, a compact, platform-neutral instruction set that the Java Virtual Machine (JVM) executes.

How Does a Java Code Compiler Work?

When developers write Java code, they use a text editor or an IDE to create files with the “.java” extension. These files contain the source code that defines the application's logic and behavior. The JVM cannot run this source code directly. It must first be converted into bytecode, a set of instructions that the JVM either interprets or compiles to native machine code at run time.

This is where the Java code compiler comes into play. The compiler (javac, in the standard JDK) reads the developer's source code and checks it for syntax errors and for semantic errors such as type mismatches or undeclared variables. If it finds no errors, it translates the source code into bytecode and writes one “.class” file per class, so nested and anonymous classes get class files of their own.
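As an illustration, here is a minimal sketch of the whole cycle. It assumes a JDK is installed and on the PATH, and the class name `Hello` and method `greeting` are hypothetical. The comments show the two commands involved: `javac` produces `Hello.class`, and `java` asks the JVM to load and run it.

```java
// Hello.java: the file name must match the public class name.
//
// Compile:  javac Hello.java   (writes Hello.class, which contains bytecode)
// Run:      java Hello         (the JVM loads Hello.class and prints "Hello, world!")
public class Hello {

    // Builds the greeting; kept separate from main so it is easy to test.
    static String greeting(String name) {
        return "Hello, " + name + "!";
    }

    public static void main(String[] args) {
        System.out.println(greeting("world"));
    }
}
```

Running `javap -c Hello` afterwards disassembles `Hello.class` and lists the bytecode instructions javac generated, which is a quick way to see what the compiler actually produced.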

Benefits of Using a Java Code Compiler

Using a Java code compiler offers several advantages to developers:

- Error Detection: The compiler catches syntax errors, type mismatches, and other compile-time problems before the code is ever executed.

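- Efficient Execution: Because source code is parsed and checked once at compile time, the JVM starts from compact, pre-validated bytecode instead of raw source. javac itself performs only light optimizations such as constant folding; most performance tuning happens at run time in the JVM's just-in-time (JIT) compiler.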

- Platform Independence: The bytecode generated by the compiler can run on any system with a JVM installed, making Java applications platform-independent.

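- Enhanced Security: The compiler's type checking rules out whole classes of errors, and the JVM's bytecode verifier checks class files before running them. Shipping bytecode instead of source offers only limited protection of intellectual property, however, because class files can be decompiled fairly easily.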
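To see why compiled bytecode is platform-neutral, it helps to look at what a class file actually records. The sketch below is a hypothetical `ClassFileHeader` utility, not a standard JDK tool. It reads the first eight bytes of a `.class` file: the fixed magic number `0xCAFEBABE`, followed by the minor and major format versions. Nothing in the header names an operating system or CPU. The major version only tells a JVM which class-file format it must understand, so in general any JVM of that release or newer can load the file.

```java
import java.io.IOException;
import java.nio.file.Files;
import java.nio.file.Path;

public class ClassFileHeader {

    // Every valid .class file begins with these four bytes.
    static final int MAGIC = 0xCAFEBABE;

    // Returns the class-file major version, or throws if the bytes are not a class file.
    static int majorVersion(byte[] bytes) {
        if (bytes.length < 8) {
            throw new IllegalArgumentException("too short to be a class file");
        }
        int magic = ((bytes[0] & 0xFF) << 24) | ((bytes[1] & 0xFF) << 16)
                | ((bytes[2] & 0xFF) << 8) | (bytes[3] & 0xFF);
        if (magic != MAGIC) {
            throw new IllegalArgumentException("missing 0xCAFEBABE magic number");
        }
        // Header layout: u4 magic, u2 minor_version, u2 major_version (all big-endian).
        return ((bytes[6] & 0xFF) << 8) | (bytes[7] & 0xFF);
    }

    // For Java 5 (major version 49) and later, the release is the major version minus 44,
    // e.g. 52 -> Java 8 and 65 -> Java 21.
    static int javaRelease(int majorVersion) {
        return majorVersion - 44;
    }

    public static void main(String[] args) throws IOException {
        byte[] bytes = Files.readAllBytes(Path.of(args[0]));
        int major = majorVersion(bytes);
        System.out.println("major version " + major + " (Java " + javaRelease(major) + ")");
    }
}
```

After compiling it with `javac ClassFileHeader.java`, running `java ClassFileHeader Hello.class` prints the format version of any class file; the exact number depends on the JDK (and `--release` setting) used to compile it.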

In Conclusion

The Java code compiler is an essential tool in the development of Java applications. It converts human-readable source code into bytecode that any compatible JVM can run, and it catches many errors before the program executes. Together, these roles help developers build robust, portable applications and keep their development workflow efficient.

Understanding Java Code Compilers: FAQs and Resources

- What is a Java code compiler?

- How does a Java code compiler work?

- What are the benefits of using a Java code compiler?

- Which tools are commonly used as Java code compilers?

- Can you recommend any resources for learning more about Java code compilation?

What is a Java code compiler?

A Java code compiler is a fundamental tool in the Java platform that translates human-readable Java source code into bytecode. It checks the code's syntax and types, reports any errors it finds, and produces class files containing instructions for the Java Virtual Machine (JVM). The compiler guarantees that the code is free of compile-time errors before it runs; it cannot, however, catch logic errors that only show up when the program executes.

How does a Java code compiler work?

The Java compiler reads the human-readable source code written by developers, checks it for syntax and type errors, and translates it into bytecode, a set of instructions that the Java Virtual Machine (JVM) can execute. Because the same bytecode runs on any platform with a compatible JVM, this step is what makes Java applications portable. And because errors are reported at compile time, developers find many mistakes before the program ever runs.

What are the benefits of using a Java code compiler?

The compiler catches syntax and type errors before execution, which shortens the feedback loop while coding. Its bytecode output runs on any platform with a compatible JVM, where the JIT compiler optimizes it at run time. On security, the main benefits come from compile-time type checking and from the JVM's verification of bytecode before it runs; compiling does little to hide source code, since class files can be decompiled.

Which tools are commonly used as Java code compilers?

Two compilers account for most Java compilation: the official Java compiler (javac), which ships with the JDK, and the Eclipse Compiler for Java (ECJ), which is built into the Eclipse IDE and is also available as a standalone compiler. Most other tools drive one of these rather than providing their own. Build tools such as Apache Ant invoke a compiler (javac by default) as part of a build, and JetBrains' IntelliJ IDEA uses javac by default while also supporting ECJ. Whichever tool is used, the result is the same kind of “.class” file containing bytecode for the JVM.
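javac is not only a command-line program: the JDK also exposes it through the standard `javax.tools` API (`ToolProvider.getSystemJavaCompiler()`), which lets a program compile code and inspect the errors it reports. The sketch below uses that API to compile source held in a string and collect the error diagnostics. The class `CompileCheck` and its helpers are hypothetical, and the sketch assumes it runs on a full JDK, since `getSystemJavaCompiler()` returns `null` when no compiler is available. It illustrates the API only and does not describe how any particular IDE or build tool works internally.

```java
import java.net.URI;
import java.nio.file.Files;
import java.nio.file.Path;
import java.util.List;
import java.util.stream.Collectors;
import javax.tools.Diagnostic;
import javax.tools.DiagnosticCollector;
import javax.tools.JavaCompiler;
import javax.tools.JavaFileObject;
import javax.tools.SimpleJavaFileObject;
import javax.tools.StandardJavaFileManager;
import javax.tools.ToolProvider;

public class CompileCheck {

    // Hypothetical helper: a source file held in memory, so no .java file is needed on disk.
    static final class StringSource extends SimpleJavaFileObject {
        private final String code;

        StringSource(String className, String code) {
            // The URI path must end in "<ClassName>.java" so javac accepts the public class name.
            super(URI.create("string:///" + className + Kind.SOURCE.extension), Kind.SOURCE);
            this.code = code;
        }

        @Override
        public CharSequence getCharContent(boolean ignoreEncodingErrors) {
            return code;
        }
    }

    // Compiles a single class and returns javac's error messages; an empty list means success.
    static List<String> compile(String className, String code) throws Exception {
        JavaCompiler compiler = ToolProvider.getSystemJavaCompiler();
        if (compiler == null) {
            throw new IllegalStateException("No system Java compiler: run this on a JDK");
        }
        DiagnosticCollector<JavaFileObject> diagnostics = new DiagnosticCollector<>();
        // Generated .class files go to a throwaway temporary directory.
        Path outDir = Files.createTempDirectory("compile-check");
        try (StandardJavaFileManager fileManager =
                compiler.getStandardFileManager(diagnostics, null, null)) {
            compiler.getTask(null, fileManager, diagnostics,
                    List.of("-d", outDir.toString()), null,
                    List.of(new StringSource(className, code))).call();
        }
        return diagnostics.getDiagnostics().stream()
                .filter(d -> d.getKind() == Diagnostic.Kind.ERROR)
                .map(d -> "line " + d.getLineNumber() + ": " + d.getMessage(null))
                .collect(Collectors.toList());
    }

    public static void main(String[] args) throws Exception {
        // Well-formed source: no errors expected.
        System.out.println(compile("Ok", "public class Ok { int x = 1; }"));
        // Type error: a String literal cannot initialize an int field, so javac rejects it.
        System.out.println(compile("Bad", "public class Bad { int x = \"one\"; }"));
    }
}
```

The first call should return an empty list, and the second should report a single incompatible-types error on line 1. This is the same compile-time error detection described in the benefits above, surfaced as data a program can act on rather than as console output.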

Can you recommend any resources for learning more about Java code compilation?

For readers who want to deepen their understanding of Java code compilation, useful starting points include Oracle's official Java documentation and tutorials (including the reference page for the javac tool), learning platforms such as JetBrains Academy and Codecademy, and books such as “Effective Java” by Joshua Bloch, which focuses on writing good Java code rather than on compilation itself. For the precise rules the compiler enforces and the bytecode it produces, the Java Language Specification and the Java Virtual Machine Specification are the authoritative references. Online forums and communities dedicated to Java programming also offer practical advice and real-world experience from seasoned developers.