Exploring the Future of Symbolic AI: Bridging Logic and Learning

Understanding Symbolic AI

Symbolic AI, often referred to as “Good Old-Fashioned Artificial Intelligence” (GOFAI), is a branch of artificial intelligence that focuses on the use of high-level symbolic representations to enable machines to perform reasoning and problem-solving tasks. Unlike other forms of AI that rely heavily on data-driven approaches, such as neural networks in machine learning, symbolic AI emphasizes the use of explicit rules and logic.

The Foundations of Symbolic AI

The core idea behind symbolic AI is to represent human knowledge in a way that computers can process and manipulate. This involves using symbols to represent various concepts and employing logical rules to manipulate these symbols. The approach is based on the notion that human intelligence can be modeled through the manipulation of symbols.

Key Components

- Knowledge Representation: This involves encoding information about the world in a form that a computer system can utilize to solve complex tasks. Common methods include semantic networks, frames, and ontologies.

- Inference Engines: These are systems designed to apply logical rules to the knowledge base in order to derive new information or make decisions.

- Rule-Based Systems: These systems use sets of “if-then” rules for decision-making processes. They are particularly useful in expert systems where domain-specific knowledge is crucial.
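
To see how these three components fit together, here is a minimal sketch of a forward-chaining rule engine. The facts, the rules, and the names `forward_chain` and `TRIAGE_RULES` are hypothetical and chosen only for illustration. Real expert system shells are considerably more elaborate.

```python
from typing import FrozenSet, List, Set, Tuple

# A rule is (premises, conclusion): if every premise is a known fact,
# the conclusion is added to the set of known facts.
Rule = Tuple[FrozenSet[str], str]

# Hypothetical toy knowledge base (illustrative only).
TRIAGE_RULES: List[Rule] = [
    (frozenset({"has_fever", "has_cough"}), "possible_flu"),
    (frozenset({"possible_flu"}), "recommend_rest"),
]


def forward_chain(facts: Set[str], rules: List[Rule]) -> Set[str]:
    """Inference engine: apply rules repeatedly until nothing new is derived."""
    known = set(facts)
    changed = True
    while changed:
        changed = False
        for premises, conclusion in rules:
            if premises <= known and conclusion not in known:
                known.add(conclusion)
                changed = True
    return known


if __name__ == "__main__":
    print(sorted(forward_chain({"has_fever", "has_cough"}, TRIAGE_RULES)))
```

In this sketch the set of facts plays the role of the knowledge representation, the list of “if-then” pairs is the rule base, and `forward_chain` acts as the inference engine.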

Applications of Symbolic AI

Symbolic AI has been successfully applied in various domains where structured reasoning and expert knowledge are essential:

- Expert Systems: These systems emulate the decision-making ability of a human expert. They have been used extensively in areas such as medical diagnosis, financial services, and technical support.

- Natural Language Processing (NLP): Early NLP systems relied heavily on symbolic approaches for understanding syntax and semantics.

- Theorem Proving: Symbolic AI techniques are used in automated theorem proving where logical proofs are generated by machines.

The Evolution and Challenges

While symbolic AI was dominant during the early years of artificial intelligence research, it faced significant challenges because of its limitations in handling uncertainty and learning from data. This led to the rise of machine learning techniques, which excel at pattern recognition and learning from large datasets.

The main challenges faced by symbolic AI include:

- Lack of Learning Capability: Traditional symbolic systems do not learn from experience or adapt over time without manual intervention.

- Difficulties with Uncertainty: Handling ambiguous or uncertain information is challenging for rule-based systems without probabilistic reasoning extensions.
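
One historical way rule-based systems were extended to cope with uncertain evidence is the certainty factor, popularized by the MYCIN expert system. The sketch below is a simplified illustration, not a full probabilistic model. It assumes all certainty factors lie in [0, 1], and the function names `rule_cf` and `combine_cf` are hypothetical.

```python
from typing import Sequence


def rule_cf(premise_cfs: Sequence[float], rule_strength: float) -> float:
    """Certainty of a conclusion drawn by one rule.

    The conjunction of premises is as certain as its weakest premise,
    scaled by how strongly the rule itself is believed.
    """
    return min(premise_cfs) * rule_strength


def combine_cf(cf1: float, cf2: float) -> float:
    """Combine two supporting certainty factors for the same conclusion."""
    if not (0.0 <= cf1 <= 1.0 and 0.0 <= cf2 <= 1.0):
        raise ValueError("this sketch only handles certainty factors in [0, 1]")
    # Each new piece of evidence closes part of the remaining gap to 1.
    return cf1 + cf2 * (1.0 - cf1)


if __name__ == "__main__":
    first = rule_cf([0.9, 0.5], rule_strength=0.8)
    second = rule_cf([0.7], rule_strength=0.6)
    print(combine_cf(first, second))
```

Two independent rules supporting the same conclusion reinforce each other, but the combined value never exceeds 1.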

The Future of Symbolic AI

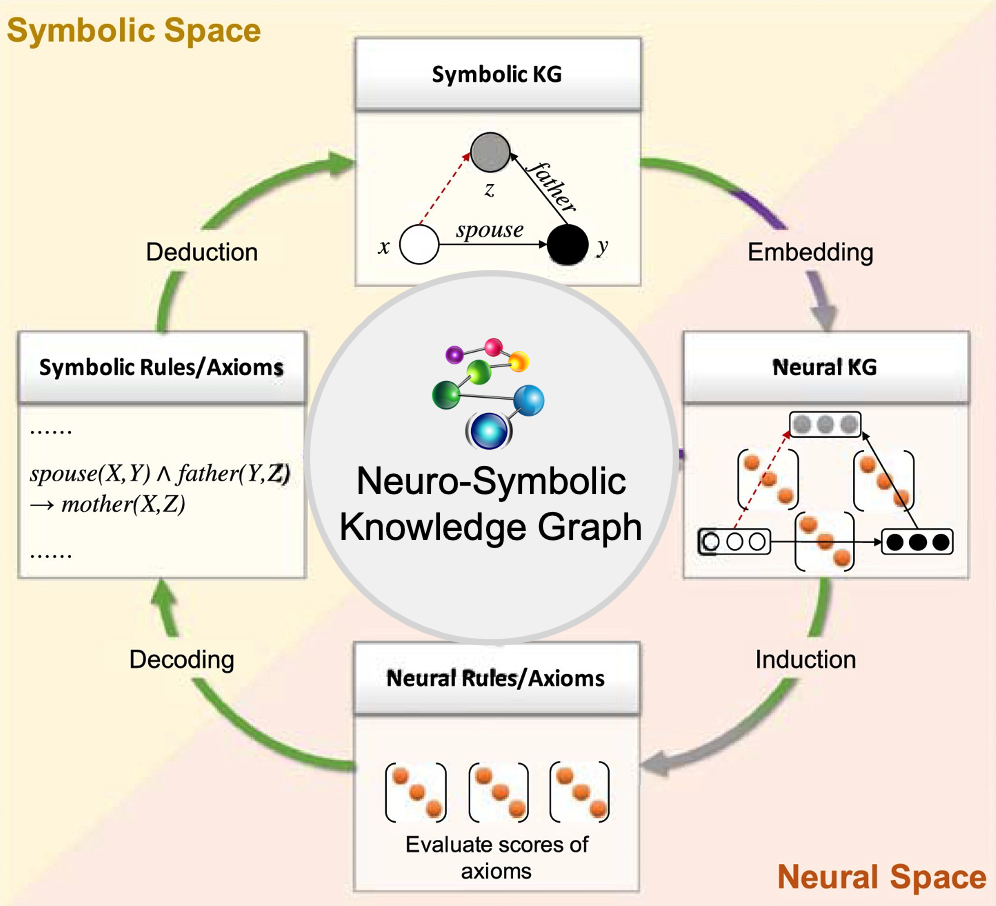

Today’s advancements in hybrid models aim to combine the strengths of both symbolic and sub-symbolic (e.g., machine learning) approaches. By integrating explicit reasoning with data-driven insights, researchers hope to create more robust AI systems capable of complex decision-making while maintaining interpretability.

This synergy could lead to breakthroughs across various fields by leveraging structured knowledge representations alongside adaptive learning capabilities offered by modern machine learning techniques.

Mastering Symbolic AI: 7 Essential Tips for Navigating Knowledge Representation, Expert Systems, and Hybrid Approaches

- Understand the principles of symbolic AI, which focuses on manipulating symbols to perform tasks.

- Learn about knowledge representation techniques used in symbolic AI, such as logic and rules.

- Explore expert systems, a type of symbolic AI that emulates human expertise in a specific domain.

- Understand how symbolic AI can handle reasoning and inference to make decisions.

- Study natural language processing in the context of symbolic AI for understanding and generating human language.

- Be aware of the limitations of symbolic AI, such as scalability issues with complex domains.

- Stay updated on advancements in hybrid approaches that combine symbolic AI with other AI techniques like machine learning.

Understand the principles of symbolic AI, which focuses on manipulating symbols to perform tasks.

Symbolic AI represents knowledge as symbols and applies logical rules to those representations to derive conclusions or solve problems. This approach mimics human reasoning by using explicit, interpretable rules and structured data, allowing for precise decision-making and problem-solving in well-defined domains. Understanding its principles means grasping how these symbolic systems are constructed and how they can be used to model intelligent behavior: how knowledge representation techniques, such as semantic networks or ontologies, encode a domain, and how inference engines apply logical operations to manipulate the resulting symbols. By mastering these principles, one can appreciate how symbolic AI contributes to fields like expert systems and natural language processing, where clear logic and reasoning are crucial.

Learn about knowledge representation techniques used in symbolic AI, such as logic and rules.

Understanding knowledge representation techniques is crucial when diving into symbolic AI, as these techniques form the backbone of how machines interpret and manipulate information. Symbolic AI relies on explicit representations of knowledge, often using logic-based structures and rule-based systems to simulate human reasoning. By learning about various methods such as propositional and predicate logic, semantic networks, frames, and ontologies, one can grasp how symbolic AI systems organize complex information into understandable formats. These techniques enable computers to perform tasks like problem-solving and decision-making by applying logical rules to the structured data. Mastery of knowledge representation not only aids in building more sophisticated AI systems but also enhances one’s ability to create interpretable models that can explain their reasoning processes clearly.
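
As a concrete illustration of one of these techniques, the following sketch models a tiny semantic network in which concepts inherit properties along “is-a” links. The concepts, the properties, and the names `Concept`, `NETWORK`, and `lookup` are hypothetical. Production ontologies typically rely on dedicated languages and tooling instead of plain dictionaries.

```python
from dataclasses import dataclass, field
from typing import Any, Dict, Optional


@dataclass
class Concept:
    """A node in the network: an optional is-a parent plus local properties."""
    parent: Optional[str] = None
    props: Dict[str, Any] = field(default_factory=dict)


# Hypothetical toy network (illustrative only).
NETWORK: Dict[str, Concept] = {
    "animal": Concept(props={"alive": True}),
    "bird": Concept(parent="animal", props={"can_fly": True}),
    "penguin": Concept(parent="bird", props={"can_fly": False}),
}


def lookup(concept: str, prop: str) -> Optional[Any]:
    """Walk up the is-a chain; the most specific value found wins."""
    current: Optional[str] = concept
    while current is not None:
        node = NETWORK[current]
        if prop in node.props:
            return node.props[prop]
        current = node.parent
    return None  # property unknown anywhere along the chain


if __name__ == "__main__":
    print(lookup("penguin", "can_fly"), lookup("penguin", "alive"))
```

Because every answer comes from an explicit node in the chain, the system can always say which concept supplied a given property, which is the kind of interpretability the paragraph above describes.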

Explore expert systems, a type of symbolic AI that emulates human expertise in a specific domain.

Expert systems are a fascinating application of symbolic AI, designed to replicate the decision-making abilities of a human expert within a specific domain. These systems utilize a knowledge base composed of facts and rules, often represented in “if-then” statements, to solve complex problems that typically require specialized human expertise. By processing this structured information through an inference engine, expert systems can provide solutions, recommendations, or diagnoses similar to those a human expert might offer. They have been successfully implemented in various fields such as medical diagnosis, financial analysis, and technical support, where accurate and reliable decision-making is crucial. As a type of symbolic AI, expert systems highlight the power of rule-based reasoning and structured knowledge representation in mimicking human cognitive processes.

Understand how symbolic AI can handle reasoning and inference to make decisions.

Symbolic AI excels at reasoning and inference, which are critical for making informed decisions. By utilizing structured representations of knowledge, such as rules and logic, symbolic AI systems can process complex information and draw logical conclusions. These systems simulate human-like reasoning by applying predefined rules to known facts and chaining the results, step by step, until a conclusion is reached. For instance, in expert systems, symbolic AI can analyze a set of conditions and apply its rule-based logic to diagnose problems or suggest solutions. This approach ensures that decisions are not only consistent but also explainable, as each step in the reasoning process can be traced back to specific rules and knowledge representations used by the system.
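
The traceability described here can be sketched with a small backward-chaining prover that records how each goal was established. The rules, the facts, and the names `DIAGNOSIS_RULES` and `prove` are hypothetical. The sketch also assumes the rules contain no cycles, which a real engine would need to guard against.

```python
from typing import Dict, List, Set

# Hypothetical rules: each goal maps to alternative lists of premises.
DIAGNOSIS_RULES: Dict[str, List[List[str]]] = {
    "engine_wont_start": [["battery_dead"], ["no_fuel"]],
    "battery_dead": [["lights_off", "no_crank"]],
}


def prove(goal: str, facts: Set[str], trace: List[str], depth: int = 0) -> bool:
    """Try to establish `goal`, appending the successful reasoning steps to `trace`."""
    indent = "  " * depth
    if goal in facts:
        trace.append(f"{indent}{goal}: known fact")
        return True
    for premises in DIAGNOSIS_RULES.get(goal, []):
        sub_trace: List[str] = []  # discarded if this alternative fails
        if all(prove(p, facts, sub_trace, depth + 1) for p in premises):
            trace.append(f"{indent}{goal}: derived from {premises}")
            trace.extend(sub_trace)
            return True
    return False


if __name__ == "__main__":
    steps: List[str] = []
    if prove("engine_wont_start", {"lights_off", "no_crank"}, steps):
        print("\n".join(steps))
```

The returned trace lists every rule and fact the conclusion depends on, so a user can inspect why the system reached its answer.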

Study natural language processing in the context of symbolic AI for understanding and generating human language.

Studying natural language processing (NLP) within the context of symbolic AI offers valuable insights into understanding and generating human language. Symbolic AI, with its focus on rule-based systems and knowledge representation, provides a structured approach to tackling the complexities of language. By leveraging symbolic methods, NLP can benefit from explicit grammar rules, semantic networks, and ontologies that capture the intricacies of syntax and meaning. This approach allows for more precise language interpretation and generation, enabling machines to better mimic human-like understanding. Moreover, integrating symbolic AI with modern data-driven techniques can enhance NLP applications by combining the strengths of logical reasoning with adaptive learning capabilities. This fusion holds promise for creating more sophisticated systems capable of nuanced language processing tasks such as translation, sentiment analysis, and conversational agents.
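
To make the idea of explicit grammar rules concrete, here is a minimal sketch of a rule-based parser for a toy grammar (S → NP VP, NP → Det N, VP → V NP | V). The lexicon, the grammar, and the function names are hypothetical and cover only a handful of words. Practical symbolic parsers use far richer grammars and must deal with ambiguity.

```python
from typing import List, Optional, Tuple

# Hypothetical lexicon mapping each word to a single part of speech.
LEXICON = {"the": "Det", "a": "Det", "dog": "N", "cat": "N",
           "sees": "V", "sleeps": "V"}

Tagged = List[Tuple[str, str]]  # (word, part of speech)


def parse_np(tags: Tagged, i: int) -> Optional[Tuple[tuple, int]]:
    """NP -> Det N. Returns (tree, next index) or None."""
    if i + 1 < len(tags) and tags[i][1] == "Det" and tags[i + 1][1] == "N":
        return ("NP", tags[i][0], tags[i + 1][0]), i + 2
    return None


def parse_vp(tags: Tagged, i: int) -> Optional[Tuple[tuple, int]]:
    """VP -> V NP | V. Prefers the longer alternative when it matches."""
    if i < len(tags) and tags[i][1] == "V":
        obj = parse_np(tags, i + 1)
        if obj is not None:
            return ("VP", tags[i][0], obj[0]), obj[1]
        return ("VP", tags[i][0]), i + 1
    return None


def parse_sentence(sentence: str) -> Optional[tuple]:
    """S -> NP VP. Returns a parse tree, or None if the grammar rejects the input."""
    words = sentence.lower().split()
    if any(w not in LEXICON for w in words):
        return None
    tags = [(w, LEXICON[w]) for w in words]
    subject = parse_np(tags, 0)
    if subject is None:
        return None
    predicate = parse_vp(tags, subject[1])
    if predicate is None or predicate[1] != len(tags):
        return None  # leftover words mean the sentence is not covered
    return ("S", subject[0], predicate[0])


if __name__ == "__main__":
    print(parse_sentence("the dog sees a cat"))
```

The parser either returns an explicit tree showing which rule licensed each phrase or rejects the sentence outright, which illustrates both the precision and the brittleness of purely symbolic language processing.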

Be aware of the limitations of symbolic AI, such as scalability issues with complex domains.

When working with symbolic AI, it’s crucial to recognize its limitations, particularly regarding scalability in complex domains. Symbolic AI systems rely on predefined rules and logic to process information, which can become cumbersome and inefficient as the complexity of the domain increases. As the number of variables and possible interactions grows, maintaining and updating the rule sets can become increasingly difficult, leading to performance bottlenecks. Additionally, symbolic AI struggles with handling ambiguous or uncertain data, which is often present in real-world applications. Understanding these limitations is essential for effectively integrating symbolic AI into broader AI strategies and ensuring that it complements rather than hinders overall system performance.

Stay updated on advancements in hybrid approaches that combine symbolic AI with other AI techniques like machine learning.

Staying updated on advancements in hybrid approaches that combine symbolic AI with other AI techniques, such as machine learning, is essential for anyone involved in the field of artificial intelligence. These hybrid models aim to leverage the strengths of both symbolic reasoning and data-driven learning to create more powerful and versatile AI systems. By integrating the structured, rule-based logic of symbolic AI with the adaptive capabilities of machine learning, researchers are developing systems that can not only reason and make decisions based on explicit knowledge but also learn from vast amounts of data. This combination enhances the ability to tackle complex problems across various domains, offering solutions that are both interpretable and capable of handling uncertainty. Keeping abreast of these developments can provide valuable insights into future trends and applications in AI technology.
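
One simple pattern for such a hybrid can be sketched as follows: a learned model proposes scored labels, and symbolic constraints reject any label that contradicts known facts. This is only one of many possible integration styles. The scores below are hard-coded stand-ins for a trained model, and the names `CONSTRAINTS` and `hybrid_predict` are hypothetical.

```python
from typing import Dict, List, Set, Tuple

# Hypothetical symbolic constraints: label -> facts that rule the label out.
CONSTRAINTS: Dict[str, Set[str]] = {
    "penguin": {"observed_flying"},
    "bat": {"has_feathers"},
}


def hybrid_predict(scores: Dict[str, float],
                   facts: Set[str]) -> Tuple[str, List[str]]:
    """Pick the best-scoring label that no symbolic constraint rejects.

    `scores` stands in for the output of a learned model. The returned
    list explains which labels were rejected and why.
    """
    explanations: List[str] = []
    allowed: Dict[str, float] = {}
    for label, score in scores.items():
        violated = CONSTRAINTS.get(label, set()) & facts
        if violated:
            explanations.append(f"rejected {label}: contradicts {sorted(violated)}")
        else:
            allowed[label] = score
    if not allowed:
        raise ValueError("every candidate label violates a constraint")
    best = max(allowed, key=lambda name: allowed[name])
    return best, explanations


if __name__ == "__main__":
    # Stand-in scores; a real system would obtain these from a trained model.
    model_scores = {"penguin": 0.55, "sparrow": 0.40, "bat": 0.05}
    label, reasons = hybrid_predict(model_scores, {"observed_flying", "has_feathers"})
    print(label, reasons)
```

The learned component supplies graded, data-driven evidence, while the symbolic layer contributes hard constraints and a human-readable justification for each rejection.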